This section is dedicated to the subset of machine learning that makes prior assumptions on parameters. Before we explain how Bayes’ theorem can be applied to simple building blocks in machine learning, we introduce some notations and concepts in the subsection below. Good references for Bayesian analysis are Gelman et al. (2013) and Kruschke (2014). The latter illustrates the concepts with many lines of R code. Bayesian inference is used in Feng & He (2023) to estimate conditional expected returns and residual covariance matrices with a view to portfolio choice.

1 The Bayesian framework

Up to now, the models that have been presented rely on data only. This approach is often referred to as ‘frequentist’. Given one dataset, a frequentist will extract (i.e., estimate) a unique set of optimal parameters and consider it to be the best model. Bayesians, on the other hand, consider datasets as snapshots of reality and, for them, parameters are thus random! Instead of estimating one value for parameters (e.g., a coefficient in a linear model), they are more ambitious and try to determine the whole distribution of the parameter.

In order to outline how that can be achieved, we introduce basic notations and results. The foundational concept in Bayesian analysis is the conditional probability. Given two random sets (or events) $A$ and $B$, we define the probability of $A$ knowing $B$ (equivalently, the odds of having $A$, conditionally on having $B$) as

$$P[A|B]=\frac{P[A \cap B]}{P[B]},$$

that is, the probability of the intersection between the two sets divided by the probability of $B$. Likewise, the probability that both events occur is equal to $P[A \cap B]=P[A]P[B|A]$. Given $n$ disjoint events $A_i$, $i=1,\dots,n$, such that $\sum_{i=1}^nP[A_i]=1$, then for any event $B$, the law of total probabilities is (or implies)

$$P[B]=\sum_{i=1}^nP[B \cap A_i]=\sum_{i=1}^nP[B|A_i]P[A_i].$$

Given this expression, we can formulate a general version of Bayes’ theorem:

$$P[A_i|B]=\frac{P[A_i]P[B|A_i]}{P[B]}=\frac{P[A_i]P[B|A_i]}{\sum_{j=1}^nP[B|A_j]P[A_j]}. \tag{eq:bayes}$$
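As a small numerical sketch of the law of total probabilities and of Bayes’ theorem, consider three disjoint events $A_i$ (say, market regimes) and an event $B$ (say, a positive monthly return). All probabilities below are made up for illustration.

```python
import numpy as np

# Hypothetical inputs: three disjoint events A_1, A_2, A_3 whose probabilities sum to one
prior = np.array([0.5, 0.3, 0.2])        # P[A_i]
lik = np.array([0.7, 0.5, 0.2])          # P[B | A_i]

p_B = np.sum(lik * prior)                # law of total probabilities: P[B]
posterior = lik * prior / p_B            # Bayes' theorem: P[A_i | B]

print(p_B)
print(posterior)
```

The posterior probabilities sum to one by construction, since the denominator is exactly the sum of the numerators.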

Endowed with this result, we can move forward to the core topic of this section, which is the estimation of some parameter $\boldsymbol{\theta}$ (possibly a vector) given a dataset, which we denote with $\textbf{y}$, thereby following the conventions from Gelman et al. (2013). This notation is nonetheless suboptimal in this book because in all other chapters, $\textbf{y}$ stands for the label of a dataset.

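In Bayesian analysis, one sophistication (compared to a frequentist approach) comes from the fact that the data is not almighty. The distribution of the parameter $\boldsymbol{\theta}$ will be a mix between some prior distribution set by the statistician (the user, the analyst) and the empirical distribution from the data. More precisely, a simple application of Bayes’ formula yields

$$p(\boldsymbol{\theta}|\textbf{y})=\frac{p(\boldsymbol{\theta})p(\textbf{y}|\boldsymbol{\theta})}{p(\textbf{y})}\propto p(\boldsymbol{\theta})p(\textbf{y}|\boldsymbol{\theta}). \tag{eq:bayes2}$$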

The interpretation is immediate: the distribution of $\boldsymbol{\theta}$ knowing the data $\textbf{y}$ is proportional to the distribution of $\boldsymbol{\theta}$ times the distribution of $\textbf{y}$ knowing $\boldsymbol{\theta}$. The term $p(\textbf{y})$ is often omitted because it is simply a scaling number that ensures that the density sums or integrates to one.

We use a slightly different notation between Equation eq:bayes and Equation eq:bayes2. In the former, $P$ denotes a true probability, i.e., it is a number. In the latter, $p$ stands for the whole probability density function of $\boldsymbol{\theta}$ or $\textbf{y}$.

The whole purpose of Bayesian analysis is to compute the so-called posterior distribution $p(\boldsymbol{\theta}|\textbf{y})$ via the prior distribution $p(\boldsymbol{\theta})$ and the likelihood function $p(\textbf{y}|\boldsymbol{\theta})$. Priors are sometimes qualified as informative, weakly informative or uninformative, depending on the degree to which the user is confident in the relevance and robustness of the prior. The simplest way to define a non-informative prior is to set a constant (uniform) distribution over some realistic interval(s).
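The "prior times likelihood" recipe can be sketched on a grid of parameter values. The example below is only an illustration under simple assumptions: the data are made-up binary outcomes (1 for a positive return), the likelihood is i.i.d. Bernoulli, and the prior is uniform. The scaling term is approximated by a Riemann sum.

```python
import numpy as np

# Hypothetical data: 1 = positive monthly return, 0 = negative return
y = np.array([1, 0, 1, 1, 0, 1, 1, 1, 0, 1])

theta = np.linspace(0.001, 0.999, 999)       # grid of candidate parameter values
prior = np.ones_like(theta)                  # uniform (non-informative) prior
# i.i.d. Bernoulli likelihood of the sample, evaluated at each grid point
lik = theta ** y.sum() * (1 - theta) ** (len(y) - y.sum())

unnorm = prior * lik                         # prior times likelihood
step = theta[1] - theta[0]
posterior = unnorm / (unnorm.sum() * step)   # rescale so that the density integrates to one

post_mean = np.sum(theta * posterior) * step
print(post_mean)
```

With a uniform prior and 7 successes out of 10, the exact posterior is a Beta(8, 4) law with mean 2/3, which the grid approximation should reproduce closely.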

The most challenging part is usually the likelihood function. The easiest way to solve the problem is to resort to a specific distribution (possibly a parametric family) for the distribution of the data and then consider that observations are i.i.d., just as in a simple maximum likelihood inference. If we assume that new parameters for the distributions are gathered into $\boldsymbol{\lambda}$, then the likelihood can be written as

$$p(\textbf{y}|\boldsymbol{\theta},\boldsymbol{\lambda})=\prod_{i=1}^If_{\boldsymbol{\lambda}}(y_i;\boldsymbol{\theta}), \tag{eq:likelihood}$$

but in this case the problem becomes slightly more complex because adding new parameters changes the posterior distribution to $p(\boldsymbol{\theta},\boldsymbol{\lambda}|\textbf{y})$. The user must find out the joint distribution of $\boldsymbol{\theta}$ and $\boldsymbol{\lambda}$, given $\textbf{y}$. Because of their nested structure, these models are often called hierarchical models.

Bayesian methods are widely used for portfolio choice. The rationale is that the distribution of asset returns depends on some parameter and the main issue is to determine the posterior distribution. We very briefly review a vast literature below. Bayesian asset allocation is investigated in Lai et al. (2011) (via stochastic optimization), Guidolin & Liu (2016) and Dangl & Weissensteiner (2020). Shrinkage techniques (of means and covariance matrices) are tested in Frost & Savarino (1986), Kan & Zhou (2007) and DeMiguel et al. (2015). In a similar vein, Tu & Zhou (2010) build priors that are coherent with asset pricing theories. Finally, Bauder et al. (2020) sample portfolio returns, which makes it possible to derive a Bayesian optimal frontier. We invite the interested reader to also delve into the references that are cited within these few articles.

2 Bayesian sampling

2.1 Gibbs sampling

One adjacent field of applications of Bayes’ theorem is simulation. Suppose we want to simulate the multivariate distribution of a random vector $\textbf{X}$ given by its density $p=p(x_1,\dots,x_K)$. Often, the full distribution is complex, but its conditional distributions are more accessible. Indeed, they are simpler because they depend on only one variable (when all other values are known):

$$p(X_k=x_k|X_1=x_1,\dots,X_{k-1}=x_{k-1},X_{k+1}=x_{k+1},\dots,X_K=x_K)=p(X_k=x_k|\textbf{X}_{-k}=\textbf{x}_{-k}),$$

where we use the compact notation $\textbf{X}_{-k}$ for all variables except $X_k$. One way to generate samples with law $p$ is the following and relies both on the knowledge of the conditionals $p(x_k|\textbf{x}_{-k})$ and on the notion of Markov Chain Monte Carlo, which we outline below. The process is iterative and assumes that it is possible to draw samples of the aforementioned conditionals. We write $x_k^m$ for the $m^{th}$ sample of the $k^{th}$ variable ($X_k$). The simulation starts with a prior (or fixed, or random) sample $\textbf{x}^0=(x_1^0,\dots,x_K^0)$. Then, for a sufficiently large number of times, say $T$, new samples are drawn according to

$$\begin{aligned}
x_1^{m+1} &\sim p(X_1|X_2=x_2^m,\dots,X_K=x_K^m); \\
x_2^{m+1} &\sim p(X_2|X_1=x_1^{m+1},X_3=x_3^m,\dots,X_K=x_K^m); \\
&\dots \\
x_K^{m+1} &\sim p(X_K|X_1=x_1^{m+1},X_2=x_2^{m+1},\dots,X_{K-1}=x_{K-1}^{m+1}).
\end{aligned}$$

The important detail is that after each line, the value of the variable is updated. Hence, in the second line, $X_2$ is sampled with the knowledge of $X_1=x_1^{m+1}$ and in the last line, all variables except $X_K$ have been updated to their $(m+1)^{th}$ state. The above algorithm is called Gibbs sampling. It relates to Markov chains because each new iteration depends only on the previous one.

Under some technical assumptions, as $T$ increases, the distribution of $\textbf{x}^T$ converges to that of $\textbf{X}$. The conditions under which the convergence occurs have been widely discussed in a series of articles in the 1990s. The interested reader can have a look for instance at Tierney (1994), Roberts & Smith (1994), as well as at section 11.7 of Gelman et al. (2013).
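A minimal sketch of Gibbs sampling is given below for a case in which the conditionals are known in closed form: a bivariate Gaussian vector with zero means, unit variances and correlation `rho` (an assumed value). In that case, each variable, conditionally on the other, is Gaussian with mean `rho` times the other value and variance `1 - rho**2`. The number of draws and the burn-in length are arbitrary choices.

```python
import numpy as np

rng = np.random.default_rng(0)
rho = 0.8                                    # assumed correlation of the target law
T, burn_in = 20000, 1000                     # number of iterations, discarded draws
x1, x2 = 0.0, 0.0                            # fixed starting sample
draws = np.empty((T, 2))

for m in range(T):
    # draw the first variable conditionally on the current value of the second
    x1 = rng.normal(rho * x2, np.sqrt(1 - rho**2))
    # draw the second variable conditionally on the *updated* first variable
    x2 = rng.normal(rho * x1, np.sqrt(1 - rho**2))
    draws[m] = x1, x2

sample = draws[burn_in:]
print(np.corrcoef(sample.T)[0, 1])           # should be close to rho
```

Only univariate Gaussian draws are needed, yet the retained pairs reproduce the dependence structure of the joint law.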

Sometimes, the full distribution is complex and the conditional laws are hard to determine and to sample. Then, a more general method, called Metropolis-Hastings, can be used that relies on the rejection method for the simulation of random variables.

2.2 Metropolis-Hastings sampling

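The Gibbs algorithm can be considered as a particular case of the Metropolis-Hastings (MH) method, which, in its simplest version, was introduced in Metropolis & Ulam (1949). The premise is similar: the aim is to simulate random variables that follow $p(\textbf{x})$ with the ability to sample from a simpler form $p(\textbf{x}|\textbf{y})$ which gives the probability of the future state $\textbf{x}$, given the past one $\textbf{y}$.

Once an initial value for $\textbf{x}$ has been sampled ($\textbf{x}_0$), each new iteration ($m$) of the simulation takes place in three stages:

1. generate a candidate value $\textbf{x}'_{m+1}$ from $p(\textbf{x}|\textbf{x}_m)$,

2. compute the acceptance ratio $\alpha=\min\left(1,\frac{p(\textbf{x}'_{m+1})p(\textbf{x}_m|\textbf{x}'_{m+1})}{p(\textbf{x}_m)p(\textbf{x}'_{m+1}|\textbf{x}_m)}\right)$,

3. pick $\textbf{x}_{m+1}=\textbf{x}'_{m+1}$ with probability $\alpha$ or stick with the previous value ($\textbf{x}_{m+1}=\textbf{x}_m$) with probability $1-\alpha$.

The interpretation of the acceptance ratio is not straightforward in the general case. When the sampling generator is symmetric ($p(\textbf{x}|\textbf{y})=p(\textbf{y}|\textbf{x})$), the candidate is always chosen whenever $p(\textbf{x}'_{m+1})\ge p(\textbf{x}_m)$. If the reverse condition holds ($p(\textbf{x}'_{m+1})<p(\textbf{x}_m)$), then the candidate is retained with odds equal to $p(\textbf{x}'_{m+1})/p(\textbf{x}_m)$, which is the ratio of likelihoods. The more likely the new proposal, the higher the odds of retaining it.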

Often, the first simulations are discarded in order to leave time to the chain to converge to a high probability region. This procedure (often called ‘burn in’) ensures that the first retained samples are located in a zone that is likely, i.e., that they are more representative of the law we are trying to simulate.
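The three stages and the burn-in can be sketched as follows in the symmetric case, where the acceptance ratio reduces to a ratio of target densities. The target (a Gaussian law with mean 1 and standard deviation 0.5, known only up to a constant), the random-walk proposal, its step size and the starting value are all assumptions made for the illustration.

```python
import numpy as np

rng = np.random.default_rng(1)

def log_p(x):
    # log of the unnormalized target density (assumed: Gaussian, mean 1, sd 0.5)
    return -0.5 * ((x - 1.0) / 0.5) ** 2

T, burn_in, step = 20000, 2000, 1.0
x = 5.0                                      # deliberately poor starting value
chain = np.empty(T)

for m in range(T):
    cand = x + step * rng.normal()           # stage 1: symmetric random-walk candidate
    # stage 2: acceptance ratio min(1, p(cand) / p(x)), computed on the log scale
    alpha = np.exp(min(0.0, log_p(cand) - log_p(x)))
    if rng.uniform() < alpha:                # stage 3: accept with probability alpha
        x = cand
    chain[m] = x                             # if rejected, the previous value is kept

kept = chain[burn_in:]                       # discard the burn-in draws
print(kept.mean(), kept.std())
```

Working with log-densities avoids numerical underflow when the candidate or the current state lies far in the tails.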

For the sake of brevity, we stick to a succinct presentation here, but some additional details are outlined in section 11.2 of Gelman et al. (2013) and in chapter 7 of Kruschke (2014).

3 Bayesian linear regression

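Because Bayesian concepts are rather abstract, it is useful to illustrate the theoretical notions with a simple example. In a linear model, $y_i=\textbf{x}_i\textbf{b}+\epsilon_i$ and it is often statistically assumed that the $\epsilon_i$ are i.i.d. and normally distributed with zero mean and variance $\sigma^2$. Hence, the likelihood of Equation eq:likelihood translates into

$$p(\boldsymbol{\epsilon}|\textbf{b},\sigma)=\prod_{i=1}^I\frac{e^{-\frac{\epsilon_i^2}{2\sigma^2}}}{\sigma\sqrt{2\pi}}=(\sigma\sqrt{2\pi})^{-I}e^{-\sum_{i=1}^I\frac{\epsilon_i^2}{2\sigma^2}}.$$

In a regression analysis, the data is given both by $\textbf{y}$ and by $\textbf{X}$, hence both are reported in the notations. Simply acknowledging that $\boldsymbol{\epsilon}=\textbf{y}-\textbf{Xb}$, we get

$$\begin{aligned}
p(\textbf{y},\textbf{X}|\textbf{b},\sigma)&=(\sigma\sqrt{2\pi})^{-I}e^{-\frac{(\textbf{y}-\textbf{Xb})'(\textbf{y}-\textbf{Xb})}{2\sigma^2}} \\
&=\underbrace{(\sigma\sqrt{2\pi})^{-I}e^{-\frac{(\textbf{y}-\textbf{X}\hat{\textbf{b}})'(\textbf{y}-\textbf{X}\hat{\textbf{b}})}{2\sigma^2}}}_{\text{depends on } \sigma \text{, not } \textbf{b}}\times\underbrace{e^{-\frac{(\textbf{b}-\hat{\textbf{b}})'\textbf{X}'\textbf{X}(\textbf{b}-\hat{\textbf{b}})}{2\sigma^2}}}_{\text{depends on both } \sigma \text{ and } \textbf{b}}.
\end{aligned} \tag{eq:linlike}$$

In the last line, the second term is a function of the difference $\textbf{b}-\hat{\textbf{b}}$, where $\hat{\textbf{b}}=(\textbf{X}'\textbf{X})^{-1}\textbf{X}'\textbf{y}$. This is not surprising: $\hat{\textbf{b}}$ is a natural benchmark for the mean of $\textbf{b}$. Moreover, introducing $\hat{\textbf{b}}$ yields a relatively simple form for the probability.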

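The above expression is the frequentist (data-based) block of the posterior: the likelihood. If we want to obtain a tractable expression for the posterior, we need to find a prior component that has a form that will combine well with this likelihood. These forms are called conjugate priors. A natural candidate for the right part (that depends on both $\textbf{b}$ and $\sigma$) is the multivariate Gaussian density:

$$p(\textbf{b}|\sigma)\propto\sigma^{-k}e^{-\frac{(\textbf{b}-\textbf{b}_0)'\boldsymbol{\Lambda}_0(\textbf{b}-\textbf{b}_0)}{2\sigma^2}}, \tag{eq:linprior}$$

where we are obliged to condition with respect to $\sigma$. The density has prior mean $\textbf{b}_0$ and prior covariance matrix $\sigma^2\boldsymbol{\Lambda}_0^{-1}$. This prior gets us one step closer to the posterior because

$$p(\textbf{b},\sigma|\textbf{y},\textbf{X})\propto p(\textbf{y},\textbf{X}|\textbf{b},\sigma)p(\textbf{b},\sigma)=p(\textbf{y},\textbf{X}|\textbf{b},\sigma)p(\textbf{b}|\sigma)p(\sigma).$$

In order to fully specify the cascade of probabilities, we need to take care of $\sigma$ and set a density of the form

$$p(\sigma^2)\propto(\sigma^2)^{-1-a_0}e^{-\frac{b_0}{\sigma^2}}, \tag{eq:linsig}$$

which is close to that of the left part of eq:linlike. This corresponds to an inverse gamma distribution for the variance with prior parameters $a_0$ and $b_0$ (this scalar notation is not optimal because it can be confused with the prior mean $\textbf{b}_0$ so we must pay extra attention).

Now, we can simplify with eq:linlike, eq:linprior and eq:linsig:

$$p(\textbf{b},\sigma|\textbf{y},\textbf{X})\propto(\sigma\sqrt{2\pi})^{-I}\sigma^{-2(1+a_0)}e^{-\frac{(\textbf{y}-\textbf{X}\hat{\textbf{b}})'(\textbf{y}-\textbf{X}\hat{\textbf{b}})}{2\sigma^2}}\times e^{-\frac{(\textbf{b}-\hat{\textbf{b}})'\textbf{X}'\textbf{X}(\textbf{b}-\hat{\textbf{b}})}{2\sigma^2}}\sigma^{-k}e^{-\frac{(\textbf{b}-\textbf{b}_0)'\boldsymbol{\Lambda}_0(\textbf{b}-\textbf{b}_0)}{2\sigma^2}}e^{-\frac{b_0}{\sigma^2}},$$

which can be rewritten

$$p(\textbf{b},\sigma|\textbf{y},\textbf{X})\propto\sigma^{-I-k-2(1+a_0)}\exp\left(-\frac{(\textbf{y}-\textbf{X}\hat{\textbf{b}})'(\textbf{y}-\textbf{X}\hat{\textbf{b}})+(\textbf{b}-\hat{\textbf{b}})'\textbf{X}'\textbf{X}(\textbf{b}-\hat{\textbf{b}})+(\textbf{b}-\textbf{b}_0)'\boldsymbol{\Lambda}_0(\textbf{b}-\textbf{b}_0)+2b_0}{2\sigma^2}\right).$$

The above expression is simply a quadratic form in $\textbf{b}$ and it can be rewritten after burdensome algebra in a much more compact manner:

$$p(\textbf{b},\sigma|\textbf{y},\textbf{X})\propto\left[\sigma^{-k}e^{-\frac{(\textbf{b}-\textbf{b}_*)'\boldsymbol{\Lambda}_*(\textbf{b}-\textbf{b}_*)}{2\sigma^2}}\right]\times\left[(\sigma^2)^{-1-a_*}e^{-\frac{b_*}{\sigma^2}}\right], \tag{eq:linpost}$$

where

$$\begin{aligned}
\boldsymbol{\Lambda}_*&=\textbf{X}'\textbf{X}+\boldsymbol{\Lambda}_0, \\
\textbf{b}_*&=\boldsymbol{\Lambda}_*^{-1}(\boldsymbol{\Lambda}_0\textbf{b}_0+\textbf{X}'\textbf{X}\hat{\textbf{b}}), \\
a_*&=a_0+I/2, \\
b_*&=b_0+\frac{1}{2}\left(\textbf{y}'\textbf{y}+\textbf{b}_0'\boldsymbol{\Lambda}_0\textbf{b}_0-\textbf{b}_*'\boldsymbol{\Lambda}_*\textbf{b}_*\right).
\end{aligned}$$

This expression has two parts: the Gaussian component which relates mostly to $\textbf{b}$, and the inverse gamma component, entirely dedicated to $\sigma$. The mix between the prior and the data is clear. The posterior precision matrix of the Gaussian part ($\boldsymbol{\Lambda}_*$, which is the inverse of the covariance matrix up to the factor $\sigma^2$) is the sum between the prior and a quadratic form from the data. The posterior mean $\textbf{b}_*$ is a weighted average of the prior $\textbf{b}_0$ and the sample estimator $\hat{\textbf{b}}$. Such blends of quantities estimated from data and a user-supplied version are often called shrinkages. For instance, the original matrix of cross-terms $\textbf{X}'\textbf{X}$ is shrunk towards the prior $\boldsymbol{\Lambda}_0$. This can be viewed as a regularization procedure: the pure fit originating from the data is mixed with some ‘external’ ingredient to give some structure to the final estimation.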

The interested reader can also have a look at section 16.3 of Greene (2018) (the case of conjugate priors is treated in subsection 16.3.2).
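The posterior parameters of the conjugate case can be computed directly with a few lines of linear algebra. The sketch below relies on simulated data and on arbitrary prior values (zero prior mean, identity prior precision, inverse gamma parameters equal to 0.5); the scalar inverse gamma scale is named `beta0` in the code to avoid any confusion with the prior mean `b0`.

```python
import numpy as np

rng = np.random.default_rng(2)

# Simulated data (hypothetical): intercept plus two predictors
n_obs, k = 500, 3
X = np.column_stack([np.ones(n_obs), rng.normal(size=(n_obs, 2))])
b_true = np.array([0.01, -0.05, 0.02])
y = X @ b_true + 0.1 * rng.normal(size=n_obs)

# Prior parameters (assumed values)
b0 = np.zeros(k)                  # prior mean of the coefficients
Lambda0 = np.eye(k)               # prior precision matrix
a0, beta0 = 0.5, 0.5              # inverse gamma shape and scale for the variance

XtX = X.T @ X
b_hat = np.linalg.solve(XtX, X.T @ y)                               # sample (OLS) estimator
Lambda_star = XtX + Lambda0                                         # posterior precision
b_star = np.linalg.solve(Lambda_star, Lambda0 @ b0 + XtX @ b_hat)   # posterior mean
a_star = a0 + n_obs / 2                                             # posterior shape
beta_star = beta0 + 0.5 * (y @ y + b0 @ Lambda0 @ b0
                           - b_star @ Lambda_star @ b_star)         # posterior scale

print(b_hat)
print(b_star)
```

With a zero prior mean, the posterior mean coincides with a ridge estimator, so its norm cannot exceed that of the sample estimator: this is the shrinkage effect at work.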

The formulae above can be long and risky to implement. Luckily, there are Python packages that perform Bayesian inference for linear regression. Below, we provide one example of how it works using PyMC, which obtains the posterior by simulation (the sampling log reports the NUTS sampler) rather than from the closed-form conjugate expressions. To simplify the code and curtail computation times, we consider two predictors: market capitalization (size anomaly) and price-to-book ratio (value anomaly). In statistics, the precision matrix is the inverse of the covariance matrix. In the parameters, the first two priors relate to the Gaussian law and the last two to the inverse gamma distribution:

$$f_{\text{invgamma}}(x,\alpha,\beta)=\frac{\beta^\alpha}{\Gamma(\alpha)}x^{-1-\alpha}e^{-\frac{\beta}{x}},$$

where $\alpha$ is the shape and $\beta$ is the scale.

from sklearn.svm import SVR

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

import warnings

warnings.filterwarnings('ignore')

plt.style.use('seaborn-v0_8-whitegrid')

sns.set_palette("husl")

import pymc as pm

import arviz as az

# Building the data & import functions

from data_build import generate_data

data_ml, features, features_short, returns, stock_ids, stock_ids_short = generate_data()

features_short =["Div_yld", "EPS", "Size12m", "Mom_LT", "Ocf", "PB", "Vol_LT"]

separation_date = "2017-01-15"

data_ml_clean = data_ml.dropna(subset=(features_short + ['R1M']))

training_sample = data_ml_clean[data_ml_clean['date'] <= separation_date]

testing_sample = data_ml_clean[data_ml_clean['date'] > separation_date]

# Define prior parameters

prior_mean = np.array([0.01, 0.1, 0.1]) # Average value of parameters (prior)

prior_cov = np.diag(prior_mean**2) # Covariance matrix of parameters (prior)

# Prepare data for Bayesian regression: size (Size12m) and value (PB)

# Note: despite the variable names, the regression is fit on the testing sample

X_train = testing_sample[['Size12m', 'PB']].values

y_train = testing_sample['R1M'].values

# Build Bayesian linear model with PyMC

with pm.Model() as bayesian_lm:

    # Priors for regression coefficients (intercept + 2 predictors)

    beta = pm.MvNormal('beta', mu=prior_mean, cov=prior_cov, shape=3)

    # Inverse gamma prior, placed here on the noise scale sigma (a standard deviation)

    sigma = pm.InverseGamma('sigma', alpha=0.5, beta=0.5)

    # Expected value of outcome

    mu = beta[0] + beta[1] * X_train[:, 0] + beta[2] * X_train[:, 1]

    # Likelihood (sampling distribution) of observations

    y_obs = pm.Normal('y_obs', mu=mu, sigma=sigma, observed=y_train)

    # Sample from posterior

    trace = pm.sample(2000, return_inferencedata=True, progressbar=True)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 4 jobs)

NUTS: [beta, sigma]

Sampling 4 chains for 1_000 tune and 2_000 draw iterations (4_000 + 8_000 draws total) took 27 seconds.

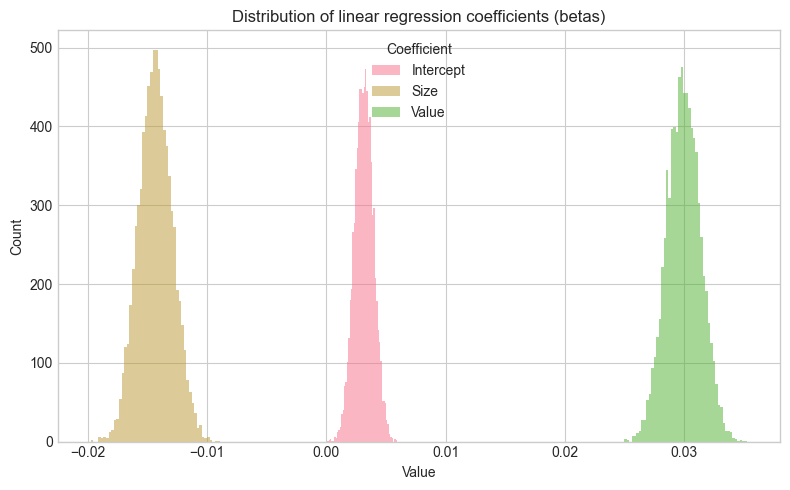

In the above specification, we must also provide a prior for the constant. By default, we set its average value to 0.01, which corresponds to a 1% average monthly return. Once the model has been estimated, we can plot the distribution of coefficient estimates.

# Extract posterior samples for beta coefficients

beta_samples = trace.posterior['beta'].values.reshape(-1, 3)

# Create DataFrame for plotting

beta_df = pd.DataFrame(beta_samples, columns=['Intercept', 'Size', 'Value'])

beta_melted = beta_df.melt(var_name='coefficient', value_name='value')

# Plot distribution of linear regression coefficients (betas)

fig, ax = plt.subplots(figsize=(8, 5))

for coef in ['Intercept', 'Size', 'Value']:

    data = beta_df[coef]

    ax.hist(data, bins=50, alpha=0.5, label=coef)

ax.set_xlabel('Value')

ax.set_ylabel('Count')

ax.legend(title='Coefficient')

ax.set_title('Distribution of linear regression coefficients (betas)')

plt.tight_layout()

plt.show()

The distribution of the constant in the figure is firmly to the right with a small dispersion, hence it is solidly positive. For the size coefficient, it is the opposite: it is negative (small firms are more profitable). With regard to value, it is hard to conclude because the distribution is balanced around zero: there is no clear exposure to the price-to-book ratio variable.

4 Naive Bayes classifier

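Bayes’ theorem can also be easily applied to classification. We formulate it with respect to the label $\textbf{y}$ and features $\textbf{X}$ and write

$$P[\textbf{y}|\textbf{X}]=\frac{P[\textbf{X}|\textbf{y}]P[\textbf{y}]}{P[\textbf{X}]}\propto P[\textbf{X}|\textbf{y}]P[\textbf{y}], \tag{eq:naivebayes}$$

and then split the input matrix into its column vectors $\textbf{X}=(\textbf{x}_1,\dots,\textbf{x}_K)$. This yields

$$P[\textbf{y}|\textbf{x}_1,\dots,\textbf{x}_K]\propto P[\textbf{x}_1,\dots,\textbf{x}_K|\textbf{y}]P[\textbf{y}]. \tag{eq:naivebayes2}$$

The ‘naive’ qualification of the method comes from a simplifying assumption on the features (see Friedman et al. (1997)). If they are all mutually independent, then the likelihood in the above expression can be expanded into

$$P[\textbf{y}|\textbf{x}_1,\dots,\textbf{x}_K]\propto P[\textbf{y}]\prod_{k=1}^KP[\textbf{x}_k|\textbf{y}]. \tag{eq:naivebayes3}$$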

The next step is to be more specific about the likelihood. This can be done non-parametrically (via kernel estimation) or with common distributions (Gaussian for continuous data, Bernoulli for binary data). In factor investing, the features are continuous, thus the Gaussian law is more adequate:

$$P[x_{i,k}=z|y_i=c]=\frac{e^{-\frac{(z-m_c)^2}{2\sigma_c^2}}}{\sigma_c\sqrt{2\pi}},$$

where $c$ is the value of the classes taken by $\textbf{y}$ and $\sigma_c$ and $m_c$ are the standard deviation and mean of $x_{i,k}$, conditional on $y_i$ being equal to $c$. In practice, each class is spanned, the training set is filtered accordingly and $\sigma_c$ and $m_c$ are taken to be the sample statistics. This Gaussian parametrization is probably ill-suited to our dataset because the features are uniformly distributed. Even after conditioning, it is unlikely that the distribution will be even remotely close to Gaussian. Technically, this can be overcome via a double transformation method. Given a vector of features $\textbf{x}_k$ with empirical cdf $F_{\textbf{x}_k}$, the variable

$$\tilde{\textbf{x}}_k=\Phi^{-1}\left(F_{\textbf{x}_k}(\textbf{x}_k)\right), \tag{eq:transf}$$

where $\Phi$ is the standard Gaussian cdf, will have a standard normal law whenever $F_{\textbf{x}_k}$ is not pathological. Non-pathological cases are when the cdf is continuous and strictly increasing and when observations lie in the open interval (0,1). If all features are independent, the transformation should not have any impact on the correlation structure. Otherwise, we refer to the literature on the NORmal-To-Anything (NORTA) method (see, e.g., Chen (2001) and Coqueret (2017)).
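The double transformation can be sketched as follows on a simulated, clearly non-Gaussian feature (an exponential sample, chosen only for illustration). The empirical cdf is obtained from ranks, and dividing by the sample size plus one is one possible convention (an assumption here) to keep all values strictly inside (0,1).

```python
import numpy as np
from scipy.stats import norm, rankdata

rng = np.random.default_rng(3)
x = rng.exponential(size=1000)           # hypothetical skewed feature

# Empirical cdf at each observation; dividing by (n + 1) avoids the values 0 and 1,
# for which the Gaussian quantile function is infinite
F_x = rankdata(x) / (len(x) + 1)
x_tilde = norm.ppf(F_x)                  # Gaussian quantile function (inverse cdf)

print(x_tilde.mean(), x_tilde.std())
```

The transformed feature is centered and has a standard deviation close to (slightly below) one, while the ordering of the observations is unchanged.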

Lastly, the prior $P[\textbf{y}]$ in Equation eq:naivebayes3 is often either taken to be uniform across the classes (one over the number of classes, for every class) or equal to the sample distribution.
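To make the mechanics concrete, the Gaussian naive Bayes rule can be coded from scratch in a few lines. This is only a sketch on simulated toy data; the function names `fit_gaussian_nb` and `predict_gaussian_nb` are ours and the prior is taken equal to the sample distribution of the classes.

```python
import numpy as np

def fit_gaussian_nb(X, y):
    """Per-class priors, means and standard deviations (sample statistics)."""
    classes = np.unique(y)
    prior = np.array([np.mean(y == c) for c in classes])
    mean = np.array([X[y == c].mean(axis=0) for c in classes])
    sd = np.array([X[y == c].std(axis=0) for c in classes])
    return classes, prior, mean, sd

def predict_gaussian_nb(X, classes, prior, mean, sd):
    # log prior + sum over features of Gaussian log-densities, for each class
    z = (X[:, None, :] - mean[None, :, :]) / sd[None, :, :]
    log_lik = (-0.5 * z**2 - np.log(sd[None, :, :] * np.sqrt(2 * np.pi))).sum(axis=2)
    log_post = np.log(prior)[None, :] + log_lik
    return classes[np.argmax(log_post, axis=1)]   # most probable class

# Hypothetical toy data: two classes with shifted feature means
rng = np.random.default_rng(4)
X = np.vstack([rng.normal(0, 1, (200, 3)), rng.normal(1.5, 1, (200, 3))])
y = np.repeat([0, 1], 200)

params = fit_gaussian_nb(X, y)
accuracy = np.mean(predict_gaussian_nb(X, *params) == y)
print(accuracy)
```

Summing log-densities across features is the code counterpart of the product in Equation eq:naivebayes3; the scaling term is not needed to find the most probable class.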

We illustrate the naive Bayes classification tool with a simple example. The scikit-learn library embeds such a classifier (GaussianNB) and we show how to use it below. Since the features are uniformly distributed, the transformation in eq:transf amounts to applying the Gaussian quantile function (inverse cdf).

For visual clarity, we only use the small set of features.

from sklearn.naive_bayes import GaussianNB

# Prepare training features (without transformation)

X_train_NB = training_sample[features_short].values

y_train_NB = training_sample['R1M_C'].values

# Fit basic Naive Bayes classifier

fit_NB_basic = GaussianNB()

fit_NB_basic.fit(X_train_NB, y_train_NB)

# Display model parameters

print(f"Naive Bayes model fitted with {len(features_short)} features")

print(f"Training samples: {len(training_sample)}")

print(f"Class prior probabilities: {fit_NB_basic.class_prior_}")

Naive Bayes model fitted with 7 features

Training samples: 139178

Class prior probabilities: [0.50025148 0.49974852]

from sklearn.naive_bayes import GaussianNB

from scipy.stats import norm

# Transform features using inverse Gaussian CDF (for uniformly distributed features)

def transform_features(data, features):

    """Apply Gaussian quantile transformation to features."""

    X = data[features].values

    # Clip to stay strictly inside (0, 1), where the quantile function is finite

    X_clipped = np.clip(X, 0.0001, 0.9999)

    X_transformed = norm.ppf(X_clipped)

    return X_transformed

# Transform training features

gauss_features_train = transform_features(training_sample, features_short)

# Fit Naive Bayes classifier

fit_NB_gauss = GaussianNB()

fit_NB_gauss.fit(gauss_features_train, training_sample['R1M_C'])

print(f"Naive Bayes model fitted with {len(features_short)} features")

print(f"Training samples: {len(training_sample)}")

Naive Bayes model fitted with 7 features

Training samples: 139178

The plots show the distributions of the features, conditionally on each value of the label. Essentially, those are the densities $P[\textbf{x}_k|\textbf{y}]$. For each feature, both distributions are very similar.

As usual, once the model has been trained, the accuracy of predictions can be evaluated.

# Transform test features

gauss_features_test = transform_features(testing_sample, features_short)

# Compute hit ratio

predictions = fit_NB_gauss.predict(gauss_features_test)

hit_ratio = np.mean(predictions == testing_sample['R1M_C'])

print(f"Hit ratio: {hit_ratio:.4f}")

Hit ratio: 0.6024

With a hit ratio of about 0.60 on this sample, the classifier does better than a random guess (0.50).

5 Bayesian additive trees

5.1 General formulation

Bayesian additive regression trees (BARTs) are an ensemble technique that mixes Bayesian thinking and regression trees. In spirit, they are close to the tree ensembles seen in the chapter on trees, but they differ greatly in their implementation. In BARTs, as in Bayesian regressions, the regularization comes from the prior. The original article is Chipman et al. (2010) and the implementation (in R) follows Sparapani et al. (2019). BARTs have been used in Shu & Tiwari (2021) to identify which characteristics are priced. The authors report that stocks’ market capitalization is the only one that matters.

Formally, the model is an aggregation of $M$ models, which we write as

$$y=\sum_{m=1}^M\mathcal{T}_m(q_m,\textbf{w}_m,\textbf{x})+\epsilon, \tag{eq:BART}$$

where $\epsilon$ is a Gaussian noise with variance $\sigma^2$, and the $\mathcal{T}_m=\mathcal{T}_m(q_m,\textbf{w}_m,\textbf{x})$ are decision trees with structure $q_m$ and weights vectors $\textbf{w}_m$. This decomposition of the tree is the one we used for boosted trees and is illustrated in the chapter on trees. $q_m$ codes all splits (variables chosen for the splits and levels of the splits) and the vectors $\textbf{w}_m$ correspond to the leaf values (at the terminal nodes).

At the macro-level, BARTs can be viewed as traditional Bayesian objects, where the parameters $\boldsymbol{\theta}$ are all of the unknowns coded through $q_m$, $\textbf{w}_m$ and $\sigma^2$ and where the focus is set on determining the posterior

$$(q_m,\textbf{w}_m,\sigma^2)\ |\ (\textbf{X},\textbf{y}). \tag{eq:bartpost}$$

Given particular forms of priors for $(q_m,\textbf{w}_m,\sigma^2)$, the algorithm draws the parameters using a combination of Metropolis-Hastings and Gibbs samplers.

5.2 Priors

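The definition of priors in tree models is delicate and intricate. The first important assumption is independence: independence between $\sigma^2$ and all other parameters and independence between trees, that is, between couples $(q_m,\textbf{w}_m)$ and $(q_n,\textbf{w}_n)$ for $m\neq n$. This assumption makes BARTs closer to random forests in spirit and further from boosted trees. This independence entails

$$P((q_1,\textbf{w}_1),\dots,(q_M,\textbf{w}_M),\sigma^2)=P(\sigma^2)\prod_{m=1}^MP(q_m,\textbf{w}_m).$$

Moreover, it is customary (for simplicity) to separate the structure of the tree ($q_m$) and the terminal weights ($\textbf{w}_m$), so that by a Bayesian conditioning

$$P((q_1,\textbf{w}_1),\dots,(q_M,\textbf{w}_M),\sigma^2)=\underbrace{P(\sigma^2)}_{\text{noise term}}\prod_{m=1}^M\underbrace{P(\textbf{w}_m|q_m)}_{\text{tree weights}}\underbrace{P(q_m)}_{\text{tree structure}}.$$

It remains to formulate the assumptions for each of the three parts.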

We start with the trees’ structures, $q_m$. Trees are defined by their splits (at nodes) and these splits are characterized by the splitting variable and the splitting level. First, the size of trees is parametrized such that a node at depth $d$ is nonterminal with probability given by

$$\alpha(1+d)^{-\beta},\quad\alpha\in(0,1),\quad\beta>0. \tag{eq:bartnode}$$

The authors recommend setting $\alpha=0.95$ and $\beta=2$. This gives a probability of 5% to have 1 terminal node, 55% to have 2 terminal nodes, 28% to have 3, 9% to have 4 and 3% to have 5 or more. Thus, the aim is to force relatively shallow structures.
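The first two probabilities can be recovered directly from Equation eq:bartnode, assuming that the root sits at depth zero: a tree has one terminal node if the root is not split, and two if the root is split and none of its two children is. The helper name `p_nonterminal` is ours.

```python
alpha, beta = 0.95, 2.0

def p_nonterminal(d, alpha=alpha, beta=beta):
    """Prior probability that a node at depth d (root: d = 0) is split."""
    return alpha * (1 + d) ** (-beta)

p0, p1 = p_nonterminal(0), p_nonterminal(1)
p_one_leaf = 1 - p0                      # the root is not split
p_two_leaves = p0 * (1 - p1) ** 2        # root split, both children terminal
print(round(p_one_leaf, 2), round(p_two_leaves, 2))   # prints 0.05 0.55
```

The probability of splitting decays quickly with depth (0.95 at the root, about 0.24 one level below), which is what keeps the trees shallow.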

Second, the choice of splitting variables is driven by a generalized Bernoulli (categorical) distribution which defines the odds of picking one particular feature. In the original paper by Chipman et al. (2010), the vector of probabilities was uniform (each predictor has the same odds of being chosen for the split). This vector can also be random and sampled from a more flexible Dirichlet distribution. The level of the split is drawn uniformly on the set of possible values for the chosen predictor.

Having determined the prior of the structure of the tree $q_m$, it remains to fix the terminal values at the leaves ($\textbf{w}_m|q_m$). The weights at all leaves are assumed to follow a Gaussian distribution $\mathcal{N}(\mu_\mu,\sigma_\mu^2)$, where $\mu_\mu$ is the center of the range of the label values. The variance $\sigma_\mu^2$ is chosen such that $\mu_\mu$ plus or minus two times $\sigma_\mu$ covers 95% of the range observed in the training dataset. Those are default values and can be altered by the user.

Lastly, for computational purposes similar to those of linear regressions, the parameter $\sigma^2$ (the variance of $\epsilon$ in eq:BART) is assumed to follow an inverse Gamma law $\text{IG}(\nu/2,\lambda\nu/2)$ akin to that used in Bayesian regressions. The parameters are by default computed from the data so that the distribution of $\sigma^2$ is realistic and prevents overfitting. We refer to the original article, section 2.2.4, for more details on this topic.

In sum, in addition to $M$ (number of trees), the prior depends on a small number of parameters: $\alpha$ and $\beta$ (for the tree structure), $\mu_\mu$ and $\sigma_\mu^2$ (for the tree weights) and $\nu$ and $\lambda$ (for the noise term).

5.3 Sampling and predictions

The posterior distribution in eq:bartpost cannot be obtained analytically but simulations are an efficient shortcut to the model eq:BART. Just as in Gibbs and Metropolis-Hastings sampling, the distribution of simulations is expected to converge to the sought posterior. After some burn-in sample, a prediction for a newly observed set $\textbf{x}_*$ will simply be the average (or median) of the predictions from the simulations. If we assume $S$ simulations after burn-in, then the average is equal to

$$\tilde{y}(\textbf{x}_*):=\frac{1}{S}\sum_{s=1}^S\sum_{m=1}^M\mathcal{T}_m\left(q_m^{(s)},\textbf{w}_m^{(s)},\textbf{x}_*\right).$$
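In code, this prediction is a sum over trees followed by an average (or median) over retained simulations. The array `tree_out` below is a hypothetical stand-in filled with random numbers, not the output of fitted trees: its entry `[s, m, i]` plays the role of the value of tree $m$ in simulation $s$ for the new observation $i$.

```python
import numpy as np

rng = np.random.default_rng(5)
S, M, n_new = 200, 20, 4                 # simulations, trees, new observations
# Hypothetical tree outputs (random placeholders for illustration)
tree_out = rng.normal(scale=0.01, size=(S, M, n_new))

per_draw = tree_out.sum(axis=1)          # sum over the M trees within each simulation
y_mean = per_draw.mean(axis=0)           # average over the S simulations
y_median = np.median(per_draw, axis=0)   # alternative: median over the simulations
print(y_mean.shape)
```

Keeping the per-simulation sums also gives access to the whole predictive distribution (e.g., quantiles), not only to a point forecast.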

The complex part is naturally to generate the simulations. Each tree is sampled using the Metropolis-Hastings method: a tree is proposed, but it replaces the existing one only under some (possibly random) criterion. This procedure is then repeated in a Gibbs-like fashion.

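Let us start with the MH building block. We seek to simulate the conditional distribution

$$(q_m,\textbf{w}_m)\ |\ (q_{-m},\textbf{w}_{-m},\sigma^2,\textbf{y},\textbf{x}),$$

where $q_{-m}$ and $\textbf{w}_{-m}$ collect the structures and weights of all trees except for tree number $m$. One tour de force in BART is to simplify the above Gibbs draws to

$$(q_m,\textbf{w}_m)\ |\ (\textbf{R}_m,\sigma^2),$$

where $\textbf{R}_m=\textbf{y}-\sum_{l\neq m}\mathcal{T}_l(q_l,\textbf{w}_l,\textbf{x})$ is the partial residual on a prediction that excludes the $m^{th}$ tree.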
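The partial residual is cheap to compute once the current fitted values of all trees are stored. In the sketch below, `fits` is a hypothetical array of random placeholders whose row `m` holds the current predictions of tree `m`, and the helper name `partial_residual` is ours.

```python
import numpy as np

rng = np.random.default_rng(6)
n, M = 100, 5
y = rng.normal(size=n)                           # hypothetical labels
fits = rng.normal(scale=0.1, size=(M, n))        # hypothetical current tree predictions

def partial_residual(y, fits, m):
    """Label minus the predictions of all trees except tree m."""
    return y - (fits.sum(axis=0) - fits[m])

R_2 = partial_residual(y, fits, 2)
full_residual = y - fits.sum(axis=0)             # residual of the whole ensemble
```

The partial residual equals the full residual plus the contribution of the excluded tree, so it can be updated in place when a single tree changes.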

The new MH proposition for $q_m$ is based on the previous tree and there are three possible (and random) alterations to the tree:

- growing a terminal node (increase the complexity of the tree by adding a supplementary leaf);

- pruning a pair of terminal nodes (the opposite operation: reducing complexity);

- changing splitting rules.

For simplicity, the third option is often excluded. Once the tree structure is defined (i.e., sampled), the terminal weights are independently drawn according to a Gaussian distribution $\mathcal{N}(\mu_\mu,\sigma_\mu^2)$.

After the tree is sampled, the MH principle requires that it be accepted or rejected based on some probability. This probability increases with the odds that the new tree increases the likelihood of the model. Its detailed computation is cumbersome and we refer to section 2.2 in Sparapani et al. (2019) for details on the matter.

Now, we must outline the overarching Gibbs procedure. First, the algorithm starts with trees that are simple nodes. Then, a specified number of loops include the following sequential steps (recall that $\textbf{R}_m$ denotes the partial residual that excludes the $m^{th}$ tree):

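| Step | Task |
|---|---|
| 1 | sample $(q_1,\textbf{w}_1) \mid (\textbf{R}_1,\sigma^2)$; |
| 2 | sample $(q_2,\textbf{w}_2) \mid (\textbf{R}_2,\sigma^2)$; |
| ... | ...; |
| m | sample $(q_m,\textbf{w}_m) \mid (\textbf{R}_m,\sigma^2)$; |
| ... | ...; |
| M | sample $(q_M,\textbf{w}_M) \mid (\textbf{R}_M,\sigma^2)$; |
| M+1 | sample $\sigma^2$ given the full residual $\textbf{R}=\textbf{y}-\sum_{l=1}^M\mathcal{T}_l(q_l,\textbf{w}_l,\textbf{x})$ |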

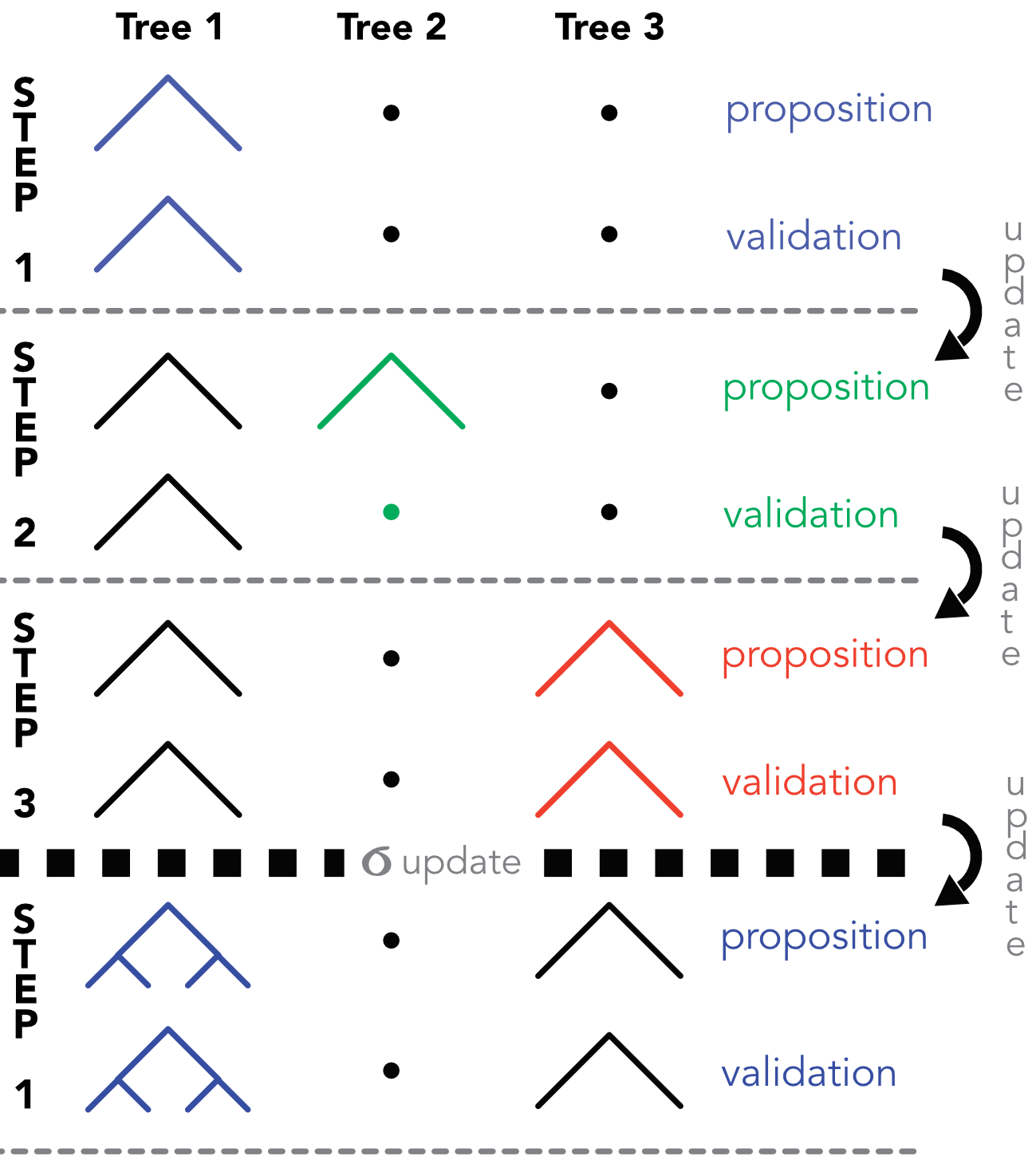

At each step , the residual is updated with the values from step . We illustrate this process in the diagram below in which . At step 1, a partition is proposed for the first tree, which is a simple node. In this particular case, the tree is accepted. In this scheme, the terminal weights are omitted for simplicity. At step 2, another partition is proposed for the tree, but it is rejected. In the third step, the proposition for the third is accepted. After the third step, a new value for is drawn and a new round of Gibbs sampling can commence.

Diagram of the MH/Gibbs sampling of BARTs. At step 2, the proposed tree is not validated.

5.4 Code

There are several Python packages that implement BART methods. Below we use pymc-bart, which integrates seamlessly with PyMC. We resort to only a few parameters, like m (the number of trees $M$) and related hyperparameters. Nevertheless, the execution of the code takes time.

import pymc_bart as pmb

# Prepare data

X_train_bart = training_sample[features_short].values

y_train_bart = training_sample['R1M'].values

X_test_bart = testing_sample[features_short].values

# Fit BART model using PyMC-BART

with pm.Model() as bart_model:

    X_shared = pm.Data('X', X_train_bart)

    # BART prior for the mean function

    mu = pmb.BART('mu', X=X_shared, Y=y_train_bart, m=20)

    # Prior for noise

    sigma = pm.HalfNormal('sigma', sigma=1)

    # Likelihood

    y_obs = pm.Normal('y_obs', mu=mu, sigma=sigma, observed=y_train_bart, shape=mu.shape)

    # Sample from posterior

    idata = pm.sample(100, tune=20, progressbar=True, random_seed=42)

    # In-sample posterior predictive (training data)

    pp_train = pm.sample_posterior_predictive(idata, random_seed=42)

Multiprocess sampling (4 chains in 4 jobs)

CompoundStep

>PGBART: [mu]

>NUTS: [sigma]

Sampling 4 chains for 20 tune and 100 draw iterations (80 + 400 draws total) took 36 seconds.

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

The effective sample size per chain is smaller than 100 for some parameters. A higher number is needed for reliable rhat and ess computation. See https://arxiv.org/abs/1903.08008 for details

Sampling: [y_obs]


Once the model is trained (in the case of BARTs, the training consists exactly in the drawing of posterior samples), we evaluate its performance. We simply compute the hit ratio.

with bart_model:

    # Swap in the test features, then draw posterior predictive samples

    pm.set_data({'X': X_test_bart})

    pp_test = pm.sample_posterior_predictive(idata)

Sampling: [mu, y_obs]

y_pred_bart = pp_test.posterior_predictive["y_obs"].values

y_pred_bart = y_pred_bart.mean(axis=(0, 1))

# Compute hit ratio (correct sign prediction)

hit_ratio_bart = np.mean(y_pred_bart * testing_sample['R1M'].values > 0)

print(f"Hit ratio: {hit_ratio_bart:.4f}")

Hit ratio: 0.5799

The performance seems reasonable but is by no means impressive. The data from all sampled trees is available in the fitted model. The simplest information we can extract is the evolution of the model’s residual standard deviation across iterations (approximating $\sigma$).

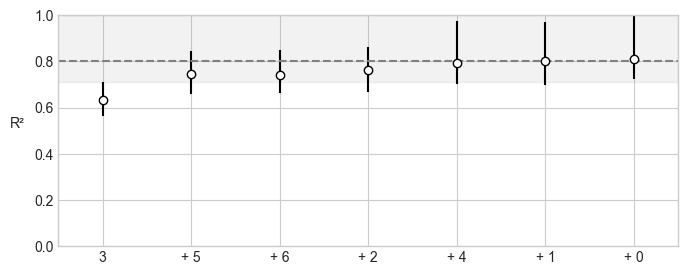

vi_results = pmb.compute_variable_importance(idata, mu, X_test_bart)

pmb.plot_variable_importance(vi_results)

<Axes: ylabel='R²'>

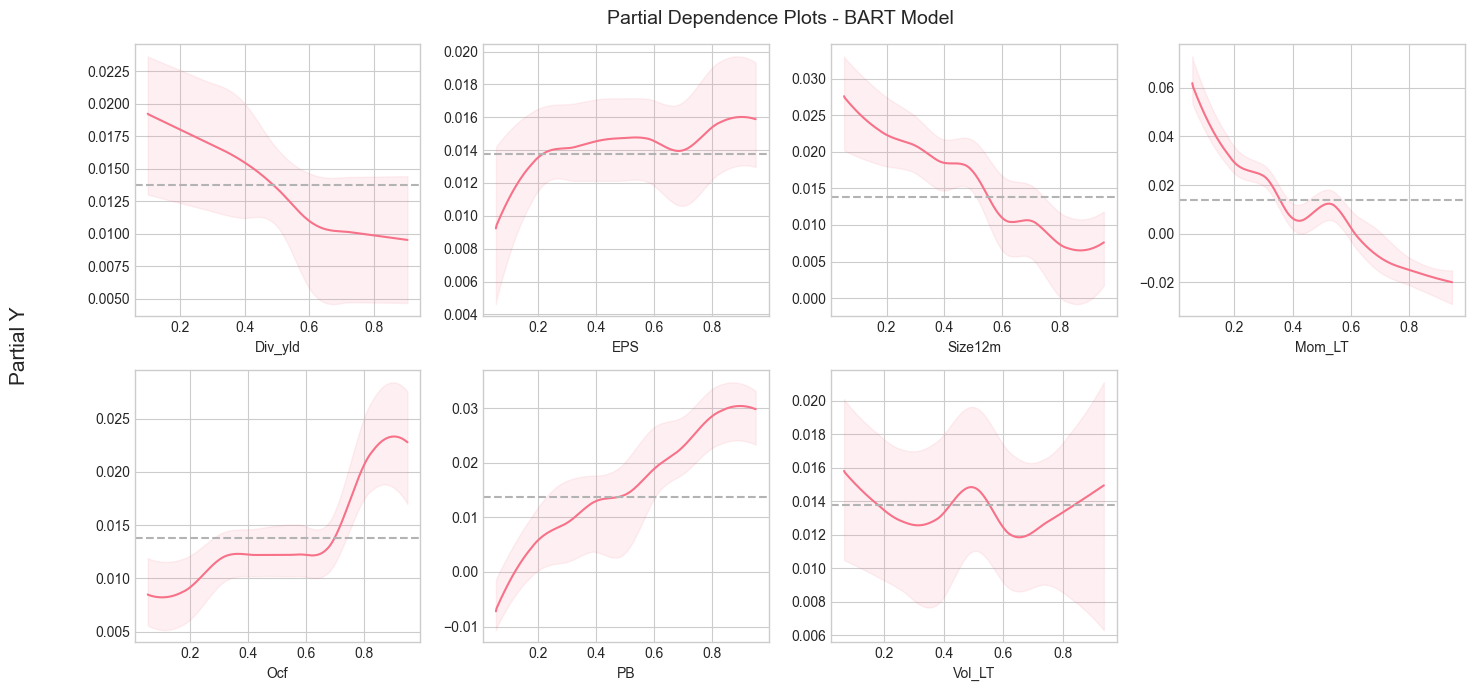

from pymc_bart.utils import plot_pdp

fig, axes = plt.subplots(2, 4, figsize=(14, 7))

axes = axes.flatten()

plot_pdp(bart_model['mu'], X=X_train_bart, Y=y_train_bart,

         xs_interval='quantiles', grid=(2, 4), var_idx=list(range(len(features_short))),

         var_discrete=None, func=None, samples=100, ax=axes[:len(features_short)])

for i, feat in enumerate(features_short):

    axes[i].set_xlabel(feat)

axes[-1].axis('off') # Hide empty subplot

fig.suptitle('Partial Dependence Plots - BART Model', fontsize=14)

plt.tight_layout()

plt.show()

And we see that...

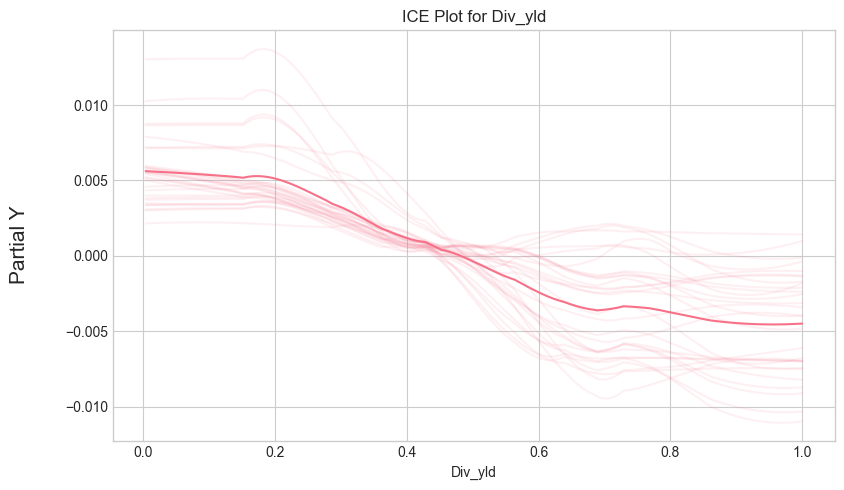

Individual Conditional Expectation (ICE) plots:

from pymc_bart.utils import plot_ice

fig, ax = plt.subplots(figsize=(8, 5))

plot_ice(bart_model['mu'], X=X_train_bart, Y=y_train_bart,

         var_idx=[0], centered=True, samples=50, ax=ax)

ax.set_xlabel(features_short[0])

ax.set_title(f'ICE Plot for {features_short[0]}')

plt.tight_layout()

plt.show()

- Gelman, A., Carlin, J. B., Stern, H. S., Dunson, D. B., Vehtari, A., & Rubin, D. B. (2013). Bayesian Data Analysis, 3rd Edition. Chapman & Hall / CRC.

- Kruschke, J. (2014). Doing Bayesian Data Analysis: A tutorial with R, JAGS, and Stan (2nd Ed.). Academic Press.

- Feng, G., & He, J. (2023). Factor investing: A Bayesian hierarchical approach. Journal of Econometrics, 233(1), 1–20.

- Lai, T. L., Xing, H., & Chen, Z. (2011). Mean–variance portfolio optimization when means and covariances are unknown. Annals of Applied Statistics, 5(2A), 798–823.

- Guidolin, M., & Liu, H. (2016). Ambiguity aversion and underdiversification. Journal of Financial and Quantitative Analysis, 51(4), 1297–1323.

- Dangl, T., & Weissensteiner, A. (2020). Optimal Portfolios under Time-Varying Investment Opportunities, Parameter Uncertainty, and Ambiguity Aversion. Journal of Financial and Quantitative Analysis, 55(4), 1163–1198.

- Frost, P. A., & Savarino, J. E. (1986). An empirical Bayes approach to efficient portfolio selection. Journal of Financial and Quantitative Analysis, 21(3), 293–305.

- Kan, R., & Zhou, G. (2007). Optimal portfolio choice with parameter uncertainty. Journal of Financial and Quantitative Analysis, 42(3), 621–656.

- DeMiguel, V., Martín-Utrera, A., & Nogales, F. J. (2015). Parameter uncertainty in multiperiod portfolio optimization with transaction costs. Journal of Financial and Quantitative Analysis, 50(6), 1443–1471.

- Tu, J., & Zhou, G. (2010). Incorporating economic objectives into Bayesian priors: Portfolio choice under parameter uncertainty. Journal of Financial and Quantitative Analysis, 45(4), 959–986.

- Bauder, D., Bodnar, T., Parolya, N., & Schmid, W. (2020). Bayesian inference of the multi-period optimal portfolio for an exponential utility. Journal of Multivariate Analysis, 175, 104544.

- Tierney, L. (1994). Markov chains for exploring posterior distributions. Annals of Statistics, 1701–1728.

- Roberts, G. O., & Smith, A. F. (1994). Simple conditions for the convergence of the Gibbs sampler and Metropolis-Hastings algorithms. Stochastic Processes and their Applications, 49(2), 207–216.

- Metropolis, N., & Ulam, S. (1949). The Monte Carlo method. Journal of the American Statistical Association, 44(247), 335–341.

- Greene, W. H. (2018). Econometric analysis, Eighth Edition. Pearson Education.